Two things can be true about vendor evaluations:

- They’re important, so it’s worth our time as AR leaders to explore ways to improve our companies’ placements in them.

- They keep us from tending to other important parts of our job, so we have a responsibility to cap the amount of time we spend on them.

The only way to do better in evaluations while reducing (or at least keeping flat) the time you spend on them is to use AI. If you work with our agency or follow me on LinkedIn, you might’ve picked up on my obsession with finding new ways to apply AI to the evaluation process.

This is personal to me. I believe there’s a lot at stake here. The number of evaluations in your industry is probably growing; your AR team probably isn’t, or at least not proportionally. That means there’s a risk that evaluations will completely swallow your available time, keeping you from the proactive, strategic, interesting work AR has to do to make the impact it’s capable of making.

I’m on a mission to help AR practitioners step off the evaluation hamster wheel. Along the way, I’ve uncovered lots of ways to use AI throughout the process of working on an MQ/Wave/etc. Some of my favorites are listed below, ranked in order of how desperately I want you to try them (#1 being the one I’m most desperate for you to try).

Give them a shot, and then let me know how it goes – gwind@arinsights.com.

1. Building your fact check case

Stage: When the analyst shares the draft of the report

What you give the AI tool:

- All the materials you submitted to the analyst

- The draft of the report

- The changes you’d like the analyst to make

- The correction process dictated by the authors, or the methodology documents from the research firm

- Context on your history of fact check requests with the authors and firm

What it gives you:

- An analysis of which desired changes have strong evidence (and which don’t)

- Evidence to support your desired changes (and a list of other changes that would be beneficial and are supported by your submission)

- A fact check response that is consistent with the format mandated by the firm, likely to convince the analyst, and fully supported by evidence from your submission
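If your approved AI tool accepts a single pasted prompt, the inputs and outputs listed above can be assembled as in the rough Python sketch below. Everything in it is an assumption made for illustration: the `FactCheckInputs` class, its field names, the section labels, and the task wording are hypothetical and are not the prompts our agency uses. Treat it as a starting point to adapt, not a finished workflow.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FactCheckInputs:
    """The five inputs from the list above. All field names are illustrative."""
    submitted_materials: str      # everything you sent the analyst
    draft_report: str             # the draft the analyst shared
    desired_changes: List[str]    # the changes you'd like the analyst to make
    correction_process: str       # the firm's or authors' rules for corrections
    request_history: str = ""     # optional context on past fact check requests


def build_fact_check_prompt(inputs: FactCheckInputs) -> str:
    """Combine the inputs into one labeled prompt (hypothetical layout)."""
    changes = "\n".join(
        f"{i}. {change}" for i, change in enumerate(inputs.desired_changes, start=1)
    )
    sections = [
        ("SUBMITTED MATERIALS", inputs.submitted_materials),
        ("DRAFT REPORT", inputs.draft_report),
        ("DESIRED CHANGES", changes),
        ("CORRECTION PROCESS", inputs.correction_process),
        ("REQUEST HISTORY", inputs.request_history or "None provided."),
    ]
    body = "\n\n".join(f"## {title}\n{text}" for title, text in sections)
    task = (
        "For each desired change, say whether the submitted materials give "
        "strong or weak evidence, and quote that evidence. List any other "
        "beneficial changes the submission supports. Then draft a fact check "
        "response that follows the correction process and cites only the "
        "submitted materials."
    )
    return f"{body}\n\n## TASK\n{task}"
```

Labeling each input separately makes it easier to ask the tool to quote only from the submitted materials, which is what keeps the final response fully supported by evidence.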

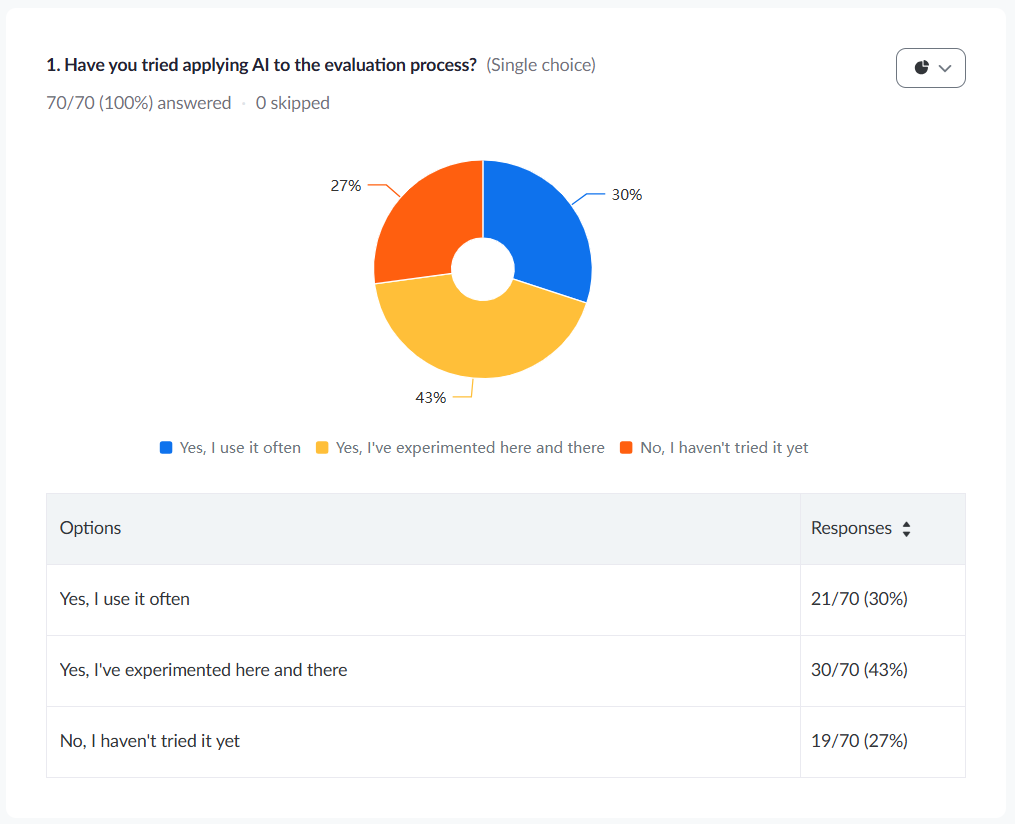

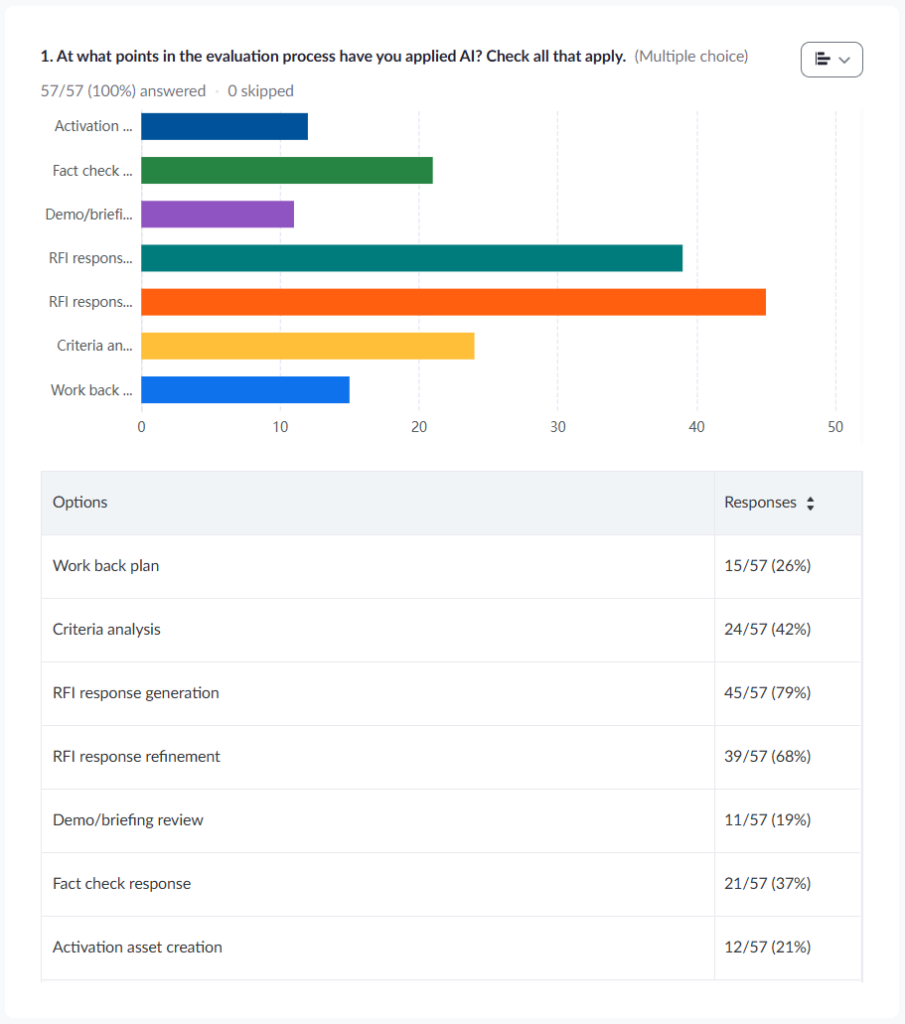

Poll data: During a March 2026 webinar, we asked the AR practitioners in attendance how they’d tried using AI in the evaluation process. Of the 57 attendees who answered the poll, 21 (37%) said they’d tried using AI at the fact check stage.

2. Making it easy for SMEs to make their contributions

Stage: When you receive the survey from the analyst

What you give the AI tool:

- Blank survey

- Answers to questions from previous surveys

- Product documentation

- Financial data

- The welcome packet/research summary/premise document

- Insights from the author team, blog posts, social media posts, and other documentation of what will resonate with the analysts

What it gives you:

- A partially populated response to the survey

- Answer guides for SMEs

- Drafts of emails to send to SMEs with targeted, focused requests

Poll data: Of the 57 AI users who answered our webinar poll, 45 (79%) said they’d tried using AI to generate RFI responses.

3. Refining your responses to the survey

Stage: When you have a complete first draft of your survey response (with SME contributions incorporated into it)

What you give the AI tool:

- Complete draft response to the survey

- Criteria from the welcome packet

- Description of your placement in the previous report

- Competitive intelligence

What it gives you:

- Suggestions for how you can improve answers to certain questions

- An analysis of your competitive gaps

- Predicted scores

Poll data: Of the 57 AI users who answered our webinar poll, 39 (68%) said they’d tried using AI to refine RFI responses.

4. Punching up your briefing and demo

Stage: Between submitting your survey response and presenting your briefing and demo

What you give the AI tool:

- Demo/briefing guidance from the analysts

- Your demo script

- Your briefing materials

- Your final responses to the survey

What it gives you:

- An analysis of how your demo aligns with your survey responses

- Suggestions for how to improve your demo

Poll data: Of the 57 AI users who answered our webinar poll, 11 (19%) said they’d tried using AI to improve their briefing/demo.

5. Decoding the evaluation criteria

Stage: When you receive the welcome packet from the analyst

What you give the AI tool:

- The welcome packet

- The survey questions and demo/briefing guidance

- A reprint copy of last year’s report

- Biographical information about the analyst, insights from the authors, etc.

- Information about your competitors’ recent announcements

What it gives you:

- A preliminary estimate of where you’ll be placed in the final report

- Language to help you set placement expectations with your executives

- Positioning opportunities and risks to be aware of in your response

Poll data: Of the 57 AI users who answered our webinar poll, 24 (42%) said they’d tried using AI to decode evaluation criteria.

6. Drafting activation assets

Stage: Between your receipt of the final report and actual publication of the final report

What you give the AI tool:

- The final report

- The analyst firm’s citation rules

- Your brand voice guidelines

- Examples of previous activation assets

What it gives you:

- Outlines/drafts of LinkedIn posts, blog posts, press releases, landing pages

Poll data: Of the 57 AI users who answered our webinar poll, 12 (21%) said they’d tried using AI to draft activation assets.

7. Creating your engagement plan

Stage: Between publication of the report and kick-off of the next cycle

What you give the AI tool:

- The final report

- Report criteria

- All the materials you submitted to the analyst

- Feedback you received from the analyst

- Information about your company’s internal response to the report

What it gives you:

- A plan for future interactions with the analyst to influence future report criteria and show progress on the strengths and weaknesses identified in the report

Poll data: Of the 57 AI users who answered our webinar poll, 15 (26%) said they’d tried using AI to create engagement plans.

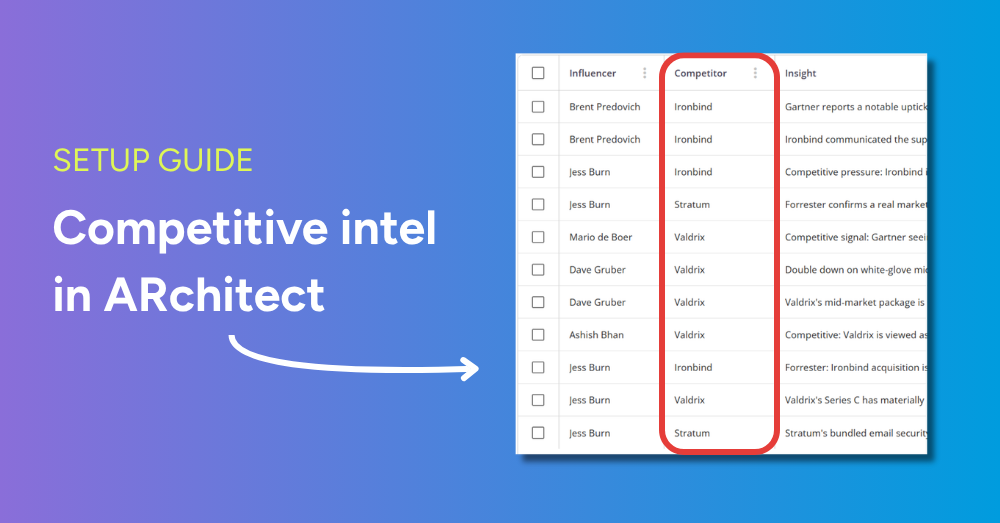

My team and I are here to help

Our agency offers a service called RFI Accelerator. Using your approved AI tools and our tested prompts and workflows, we’ll make your evaluation submissions stronger and reduce your manual workload. You can request an exploratory call here.

Appendix: Results of our March 2026 webinar polls
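If you want to check the percentages quoted in each section, or re-rank the use cases by how many respondents had tried them, a few lines of Python will do it. This is only a sketch: the counts and the 57-respondent total come from the poll figures quoted above, while the short labels and the function names `share` and `ranked` are my own shorthand.

```python
RESPONDENTS = 57  # people who answered the webinar poll

# Number of respondents who said they'd tried AI for each use case.
# Labels are shorthand for the seven sections above.
TRIED = {
    "Fact check": 21,
    "Generating RFI responses": 45,
    "Refining RFI responses": 39,
    "Improving briefing/demo": 11,
    "Decoding evaluation criteria": 24,
    "Drafting activation assets": 12,
    "Creating engagement plans": 15,
}


def share(count: int, total: int = RESPONDENTS) -> int:
    """Percentage of respondents, rounded to a whole number."""
    return round(100 * count / total)


def ranked() -> list:
    """Use cases sorted from most tried to least tried."""
    return sorted(TRIED.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for label, count in ranked():
        print(f"{label}: {count} of {RESPONDENTS} ({share(count)}%)")
```

Sorted this way, the two RFI use cases come out on top, and briefing/demo improvement is the least tried.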